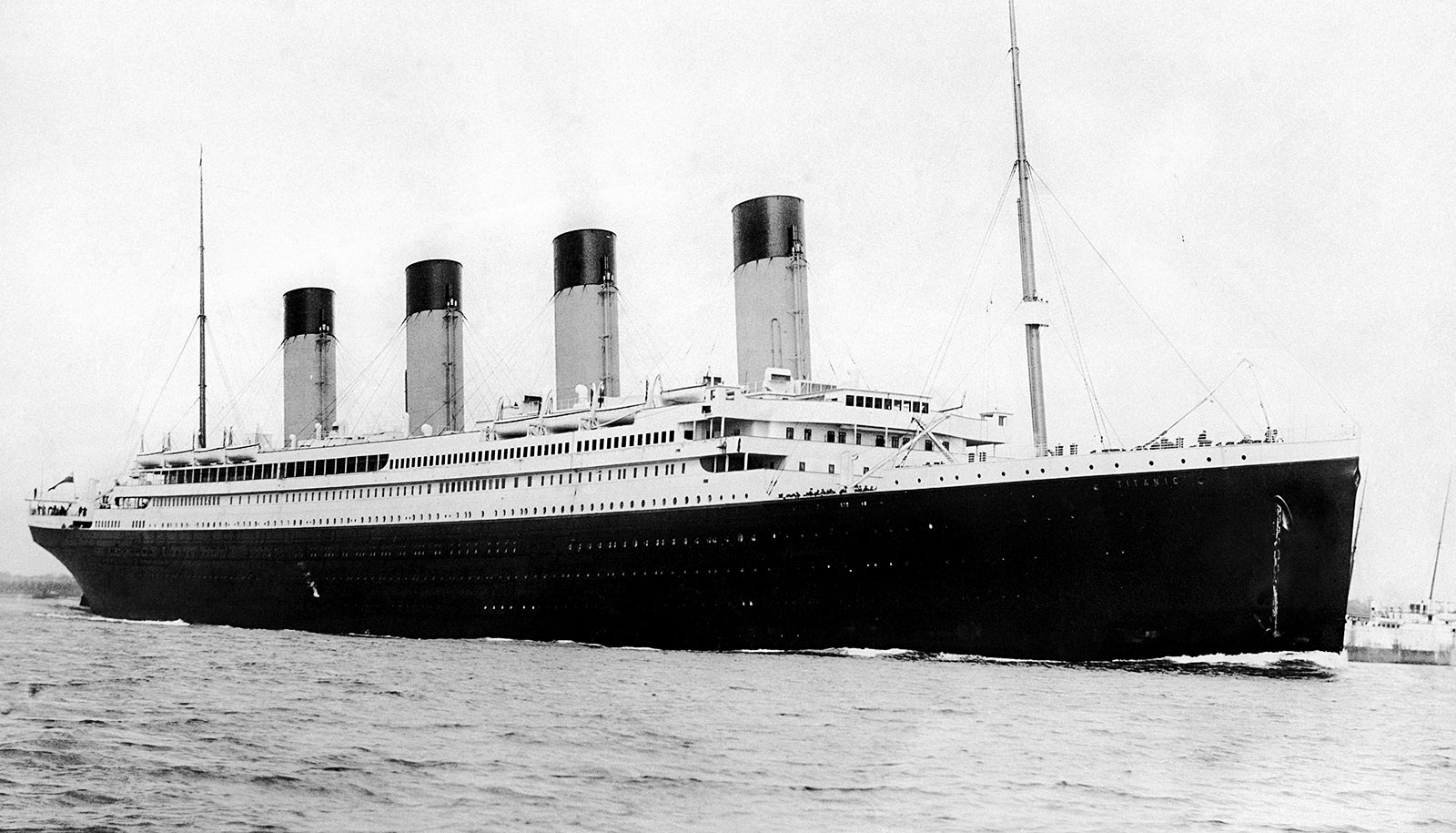

An algorithm that can predict which passengers survived the 1912 Titanic disaster with 97 percent accuracy demonstrates the power and the shortcomings of artificial intelligence, a new book argues.

AI may get things right, this finding shows, but for all the wrong reasons.

Meredith Broussard, a journalism professor at New York University, outlines this paradox in her new book Artificial Unintelligence: How Computers Misunderstand the World (MIT Press, 2018). Broussard takes the reader on a series of unconventional adventures in computer programming, including an up-close view of what it really looks like to “do” artificial intelligence.

The Titanic calculation takes several variables into account, such as gender, age, and fare class, to predict which passengers survived and which perished. Beyond its 97 percent accuracy, the algorithm revealed that the fare a passenger paid was the most important factor in determining survival: those in first class were more likely to survive than those in second or third class.
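To make the idea of "the most important factor" concrete, here is a minimal sketch. It is not Broussard's code. It uses a swapped-in technique, a OneR-style single-feature rule: for each variable, predict the majority outcome among passengers who share that variable's value, then score how often that rule is right. The passenger rows, feature names, and helper functions (`one_rule_accuracy`, `rank_features`) are all hypothetical and were invented for illustration. They are not real Titanic records, and the scores say nothing about the actual disaster.

```python
from collections import Counter, defaultdict

# Hypothetical toy records, invented for illustration only (NOT real Titanic data).
# Each row is (features, survived).
TOY_PASSENGERS = [
    ({"sex": "female", "age": "adult", "fare_class": 1}, True),
    ({"sex": "male", "age": "adult", "fare_class": 1}, True),
    ({"sex": "female", "age": "child", "fare_class": 2}, True),
    ({"sex": "male", "age": "adult", "fare_class": 2}, False),
    ({"sex": "female", "age": "adult", "fare_class": 3}, False),
    ({"sex": "male", "age": "child", "fare_class": 3}, False),
    ({"sex": "male", "age": "adult", "fare_class": 3}, False),
    ({"sex": "female", "age": "adult", "fare_class": 1}, True),
]


def one_rule_accuracy(rows, feature):
    """In-sample accuracy of predicting each passenger's fate from the
    majority outcome among all passengers sharing the same `feature` value."""
    outcomes_by_value = defaultdict(Counter)
    for features, survived in rows:
        outcomes_by_value[features[feature]][survived] += 1
    # Predicting the majority outcome for a value gets exactly the
    # majority count right for that value.
    correct = sum(c.most_common(1)[0][1] for c in outcomes_by_value.values())
    return correct / len(rows)


def rank_features(rows):
    """Return (accuracy, feature) pairs, best single-feature rule first."""
    names = rows[0][0].keys()
    return sorted(((one_rule_accuracy(rows, n), n) for n in names), reverse=True)


if __name__ == "__main__":
    for accuracy, name in rank_features(TOY_PASSENGERS):
        print(f"{name}: {accuracy:.3f}")
```

On these invented rows the fare-class rule gets 7 of 8 passengers right, yet no rule reaches 100 percent: the two second-class passengers share a fare class but meet different fates. Note also that the score is measured on the same rows used to build the rule, so it flatters the model.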


Despite the relative precision of this computer tool, however, Broussard notes that “our statistical prediction of who survived and who died on the Titanic will never be 100 percent accurate—no statistical prediction can or will ever be 100 percent accurate—because human beings are not and never will be statistics.” For example, she recounts the actions of two passengers whose fates had nothing to do with gender, age, or passenger fare, but rather with how far they jumped when fleeing the sinking vessel.

The distinction is vital, she observes, and it offers a cautionary note for today’s world, which is far more driven by technology and data than the world of 1912.

“Our Titanic model could be used to justify charging first-class passengers less for travel insurance, but that’s absurd: we shouldn’t penalize people for not being rich enough to travel first class,” writes Broussard, an assistant professor in NYU’s Arthur L. Carter Journalism Institute and a former computer scientist at AT&T Bell Labs and MIT Media Lab.

“Most of all, we should know by now that there are some things machines will never learn and that human judgment, reinforcement, and interpretation is always necessary.”

Through her Titanic example, Broussard addresses the growth of technochauvinism—the belief that technology is always the solution—and argues that the digital age will not solve persistent social problems. In fact, she posits, machine learning may exacerbate them.

“If we make pricing algorithms based on what the world looks like, women and poor and minority customers inevitably get charged more,” she observes.


“Math people are often surprised by this; women and poor and minority people are not surprised by this. Race, gender, and class influence pricing in a variety of obvious and devious ways. Women are charged more than men for haircuts, dry cleaning, razors, and even deodorant. Asian-Americans are twice as likely to be charged more for SAT prep courses. African-American restaurant servers make less in tips than white colleagues.”

What we need, she concludes, are not new and improved technologies but an understanding of the limits of what we can do with technology. With that understanding, we can make better choices about what we should do with it to make the world better for everyone.

Source: New York University