New research digs into how our brains try to tell the difference between music and speech.

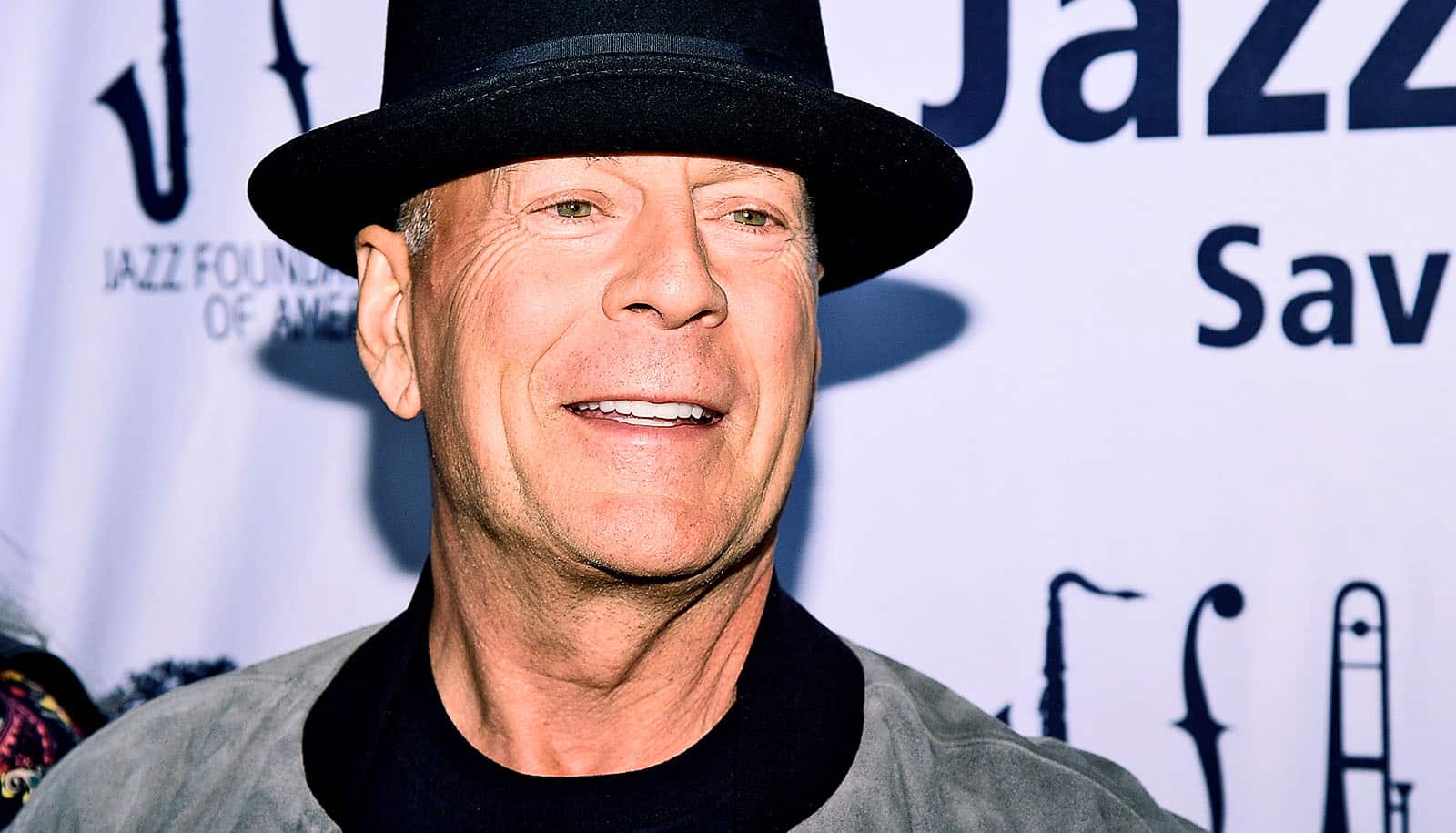

Researchers mapped out this process through a series of experiments, yielding insights that could help optimize therapeutic programs that use music to help people with aphasia regain the ability to speak. This language disorder afflicts more than 1 in 300 Americans each year, including Wendy Williams and Bruce Willis.

“Although music and speech are different in many ways, ranging from pitch to timbre to sound texture, our results show that the auditory system uses strikingly simple acoustic parameters to distinguish music and speech,” says Andrew Chang, a postdoctoral fellow in New York University’s psychology department and the lead author of the paper in the journal PLOS Biology.

“Overall, slower and steady sound clips of mere noise sound more like music while the faster and irregular clips sound more like speech.”

Scientists measure the rate of a signal in hertz (Hz). A higher number of Hz means more occurrences (or cycles) per second. For instance, people typically walk at a pace of 1.5 to 2 steps per second, which is 1.5-2 Hz. The beat of Stevie Wonder’s 1972 hit Superstition is approximately 1.6 Hz, while Anna Karina’s 1967 smash Roller Girl clocks in at 2 Hz. Speech, in contrast, is typically two to three times faster than that, at 4-5 Hz.
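To make the unit concrete, here is a minimal Python sketch that converts between Hz and events per minute, the scale musicians use for tempo. The function names are hypothetical and chosen only for illustration; the rates are the ones quoted above.

```python
def hz_to_per_minute(rate_hz: float) -> float:
    """Convert a rate in cycles per second (Hz) to events per minute."""
    return rate_hz * 60.0


def per_minute_to_hz(events_per_minute: float) -> float:
    """Convert events per minute (for example, beats per minute) to Hz."""
    return events_per_minute / 60.0


# Rates quoted in the article, in Hz.
rates_hz = {
    "walking (low end)": 1.5,
    "walking (high end)": 2.0,
    "speech (low end)": 4.0,
    "speech (high end)": 5.0,
}

for label, hz in rates_hz.items():
    per_minute = hz_to_per_minute(hz)
    print(f"{label}: {hz} Hz is about {per_minute:.0f} events per minute")
```

The conversion is just a factor of 60, since a minute has 60 seconds: a 2 Hz beat is 120 beats per minute, and speech at 4-5 Hz corresponds to roughly 240 to 300 loudness changes per minute.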

It has been well documented that the way a song’s volume, or loudness, changes over time, known as “amplitude modulation,” is relatively steady at 1-2 Hz. By contrast, the amplitude modulation of speech is typically 4-5 Hz, meaning its volume changes frequently.

Despite the ubiquity and familiarity of music and speech, scientists previously lacked a clear understanding of how we effortlessly and automatically identify a sound as one or the other.

To better understand this process, Chang and colleagues conducted four experiments in which more than 300 participants listened to audio segments of synthesized music-like and speech-like noise with various amplitude modulation speeds and degrees of regularity.

The audio noise clips allowed only the detection of volume and speed. The participants were told the clips were noise-masked music or speech, and were asked to judge whether each ambiguous clip sounded like music or speech.
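For readers who want a feel for what such clips involve, the following Python sketch generates white noise whose loudness rises and falls at a chosen rate, with an adjustable amount of irregularity. It is an illustration only and not the researchers' synthesis procedure: the function names, the raised-cosine loudness envelope, the `jitter` parameter, and the sample rate are all assumptions made for this example.

```python
import math
import random
import statistics


def peak_times(rate_hz, duration_s, jitter, rng):
    """Return times (in seconds) of loudness peaks, starting at 0.

    jitter=0 gives perfectly regular spacing. A larger value (must stay
    below 1) stretches or shrinks each interval at random by up to that
    fraction of the nominal period. The last peak lies at or past duration_s.
    """
    period = 1.0 / rate_hz
    times = [0.0]
    while times[-1] < duration_s:
        times.append(times[-1] + period * (1.0 + rng.uniform(-jitter, jitter)))
    return times


def am_noise(rate_hz, duration_s=4.0, jitter=0.0, sample_rate=8000, seed=0):
    """Return white-noise samples in [-1, 1] shaped by a loudness envelope."""
    rng = random.Random(seed)
    peaks = peak_times(rate_hz, duration_s, jitter, rng)
    samples = []
    k = 0  # index of the peak that starts the current interval
    for n in range(int(duration_s * sample_rate)):
        t = n / sample_rate
        while t >= peaks[k + 1]:
            k += 1
        # Raised cosine: loudest at each peak, quietest midway between peaks.
        phase = (t - peaks[k]) / (peaks[k + 1] - peaks[k])
        envelope = 0.5 * (1.0 + math.cos(2.0 * math.pi * phase))
        samples.append(envelope * rng.uniform(-1.0, 1.0))
    return samples


def rate_and_irregularity(peaks):
    """Return (mean rate in Hz, coefficient of variation of the intervals)."""
    intervals = [b - a for a, b in zip(peaks, peaks[1:])]
    mean = statistics.mean(intervals)
    return 1.0 / mean, statistics.pstdev(intervals) / mean


rng = random.Random(1)
slow_regular = peak_times(1.5, 4.0, 0.0, rng)    # slow and steady
fast_irregular = peak_times(4.5, 4.0, 0.5, rng)  # fast and uneven
print(rate_and_irregularity(slow_regular))
print(rate_and_irregularity(fast_irregular))

clip = am_noise(1.5)  # 4 seconds of slow, regular amplitude-modulated noise
print(len(clip), "samples")
```

The two numbers printed for each pattern correspond to the two features the study varied: how fast the loudness changes, and how regular those changes are. A coefficient of variation near zero means evenly spaced peaks, while larger values mean more uneven timing.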

Observing the pattern of participants sorting hundreds of noise clips as either music or speech revealed how much each speed and/or regularity feature affected their judgment between music and speech. It is the auditory version of “seeing faces in the cloud,” the scientists conclude: If there’s a certain feature in the soundwave that matches listeners’ idea of how music or speech should be, even a white noise clip can sound like music or speech. Examples of both music and speech may be downloaded from the research page.

The results showed that our auditory system uses surprisingly simple and basic acoustic parameters to distinguish music and speech: to participants, clips with slower rates (<2 Hz) and more regular amplitude modulation sounded more like music, while clips with higher rates (~4 Hz) and more irregular amplitude modulation sounded more like speech.

Knowing how the human brain differentiates between music and speech can potentially benefit people with auditory or language disorders such as aphasia, the authors note. Melodic intonation therapy, for instance, is a promising approach to train people with aphasia to sing what they want to say, using their intact “musical mechanisms” to bypass damaged speech mechanisms. Therefore, knowing what makes speech and music similar or distinct in the brain can help design more effective rehabilitation programs.

Additional coauthors are from NYU, Chinese University of Hong Kong, and National Autonomous University of Mexico (UNAM).

The National Institute on Deafness and Other Communication Disorders, part of the National Institutes of Health, and Leon Levy Scholarships in Neuroscience funded the work.

Source: NYU